|

I am a Machine Learning Researcher at Protege, where I lead research on spatial and physical intelligence within DataLab. Previously, I was a Senior Machine Learning Engineer at AlertWest, where I architected production AI systems for wildfire detection and monitoring. Before that, I was a Machine Learning Engineer and SME at Metal Toad, building cloud-based ML solutions on AWS. I completed my Master's in Computer Science at the University of Southern California as a National Science Foundation Graduate Research Fellow, and my Bachelor's in Computer Science at Oregon State University. My research spans embodied and physical intelligence, multimodal learning, reinforcement learning, and explainable AI, with prior work at USC, Oregon State University's Personal Robotics Lab, and the United States Naval Research Laboratory.

Email / Google Scholar / LinkedIn / X |

|

|

I'm always excited to discuss machine learning, research ideas, and innovative applications of AI. Whether you're interested in collaborating on a project, exploring new research directions, or just want to chat about the latest developments in ML, feel free to reach out via email or schedule a call. I'd love to hear from you! |

|

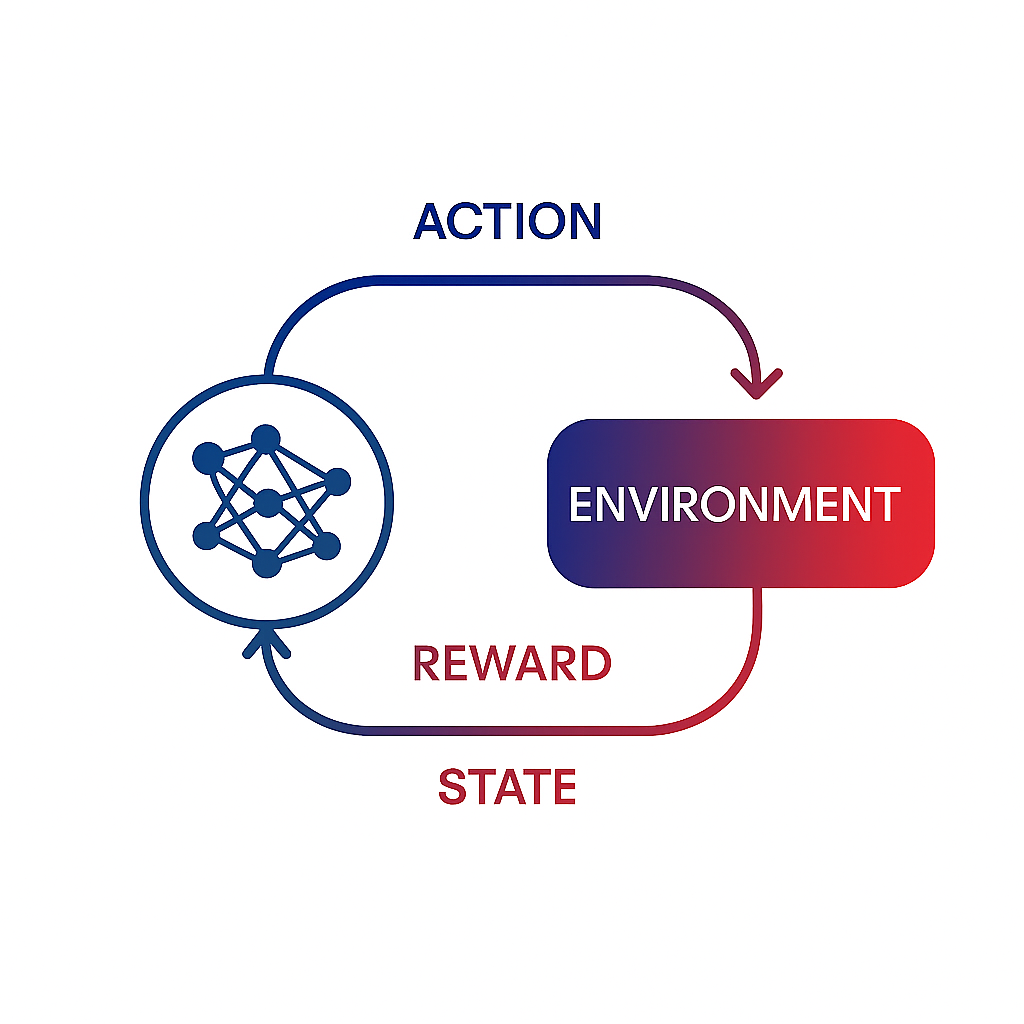

I lead the spatial and physical intelligence research vertical at Protege. My broader interests span world modeling, vision-language-action models (VLAs), vision-language models (VLMs), embodied AI, multimodal learning, and reinforcement learning. |


|

|

Outside of work, I enjoy pickleball, swing dancing (and teaching lessons), and rock climbing. |

|

Oregon State University Experience |

|

|

|

Rey Pocius. DataLab at Protege, 2026 |

|

Rey Pocius, Naghmeh Zamani, Heather Culbertson, Stefanos Nikolaidis. HRI Pioneers Workshop, HRI '20 Companion of the 2020 ACM/IEEE International Conference on Human-Robot Interaction, 591-593 |

|

William Curran, Rey Pocius, Bill Smart. 2017 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2017), 1559-1565 |

|

Rey Pocius, David Isele, Mark Roberts, David W. Aha. Thirty-Second AAAI Conference on Artificial Intelligence (AAAI-18), 8135-8136 |

|

Rey Pocius, Lawrence Neal, Alan Fern. Thirty-Third AAAI Conference on Artificial Intelligence (AAAI-19), 10007-10008 |

|

Justin Clough, Patricia Chaffey, Gautam Salhotra, Colin G. Cess, Rey Pocius, Dr. Katie Mills. 2020 ASEE Annual Conference and Exposition |

|

Robot-Assisted Hair Brushing |

|

I previously contributed to the BOTS (Building Opportunities with Teachers in Schools) program at USC, helping create scalable robotics and coding curricula for K-12 education. |

|

|